Three Tests, One Breakthrough: Putting Value in Users’ Hands at Abide

How Mike Rotella found the Goldilocks zone: right moment + scale

Mike Rotella tested paymentless trials at Abide and got a 0.01% lift. Flat.

He pulled the test down, but he couldn’t ignore the signal.

As he mulled it over, the data kept pointing the same way: once a user started a trial, they stayed. So the subscription product itself wasn’t the issue; it was exceptional. The friction had to be psychological. What could he do?

Mike leads product at Abide, a Christian meditation app with 6M+ downloads. I worked with him for five years on Citibank’s Online Banking team, so I’ve seen this pattern: when something isn’t working, he doesn’t abandon it, he reframes it, looking for the deeper insight that can unlock real user value.

👋 Hey, I’m Alex. I write Shipping on Fridays to explore the craft of how great products get built and what we can learn from the people behind them. I publish 1–2x a month on topics ranging from design sprints to how my sailing team A/B tests. This week I caught up with Mike to share the story of how he and his team tested their way to a breakthrough.

Setting the Scene: Playing on Hard Mode

Alex: First off, thanks for joining me, Mike. I’d love to hear a bit about Abide and the challenges you’re facing.

Mike: Yeah, so Abide is part of a nonprofit called Guideposts, and we’re a Christian meditation app. We’ve got about 8 billion elapsed minutes per year and a really passionate, loyal user base. But our big focus right now is new user growth, and honestly, we’re playing on hard mode.

Hard mode?

Since we launched, we’ve been the number one Christian meditation app. But in that time, about 3 to 5 big players have entered the space, all VC-backed with hundreds of millions of dollars. They’re outspending us on ads, and with AI making it easier than ever to build apps, the barrier to entry is gone. As a nonprofit, we have to understand the game being played and how we can win at it. So instead of buying growth, we have to earn it by extracting more value from every install.

That sounds like a massive challenge. Where did you start?

We knew our Premium experience was already exceptional, top percentile in trial-to-paid conversion and retention. Once people experienced it, 7 out of 10 stayed.

So the question isn’t “How do we improve Premium?” It’s “How do we get that value into users’ hands, without friction and at the right moment?”

The Problem: A Hesitant Audience

A lot of our users are hesitant to put down a credit card. Half of our user base is over 60 years old. Some don’t even understand what a free trial is. Others have this aversion to paying for a faith-based service in the first place.

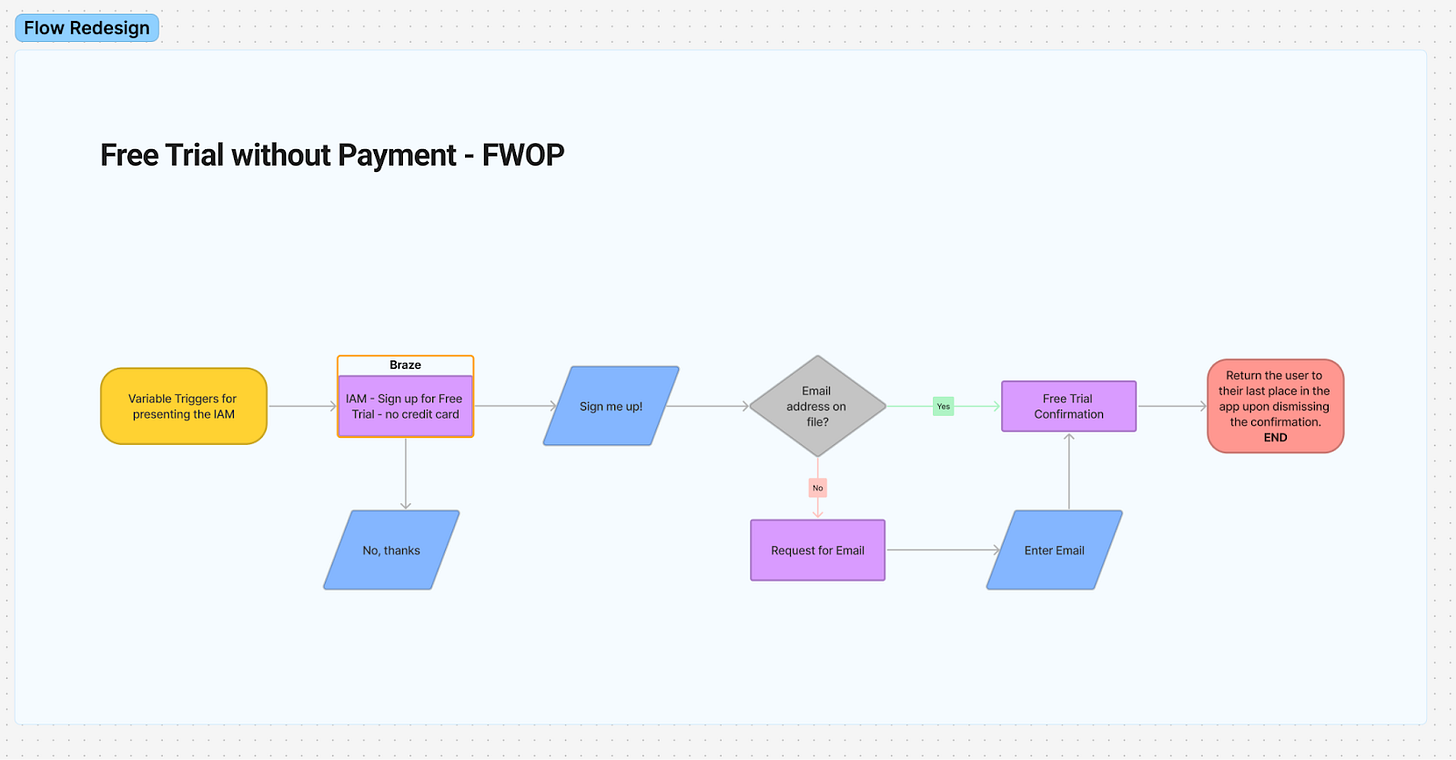

So we had this hypothesis: what if we removed the credit card requirement entirely? Give them 7 days of premium for free, no payment required upfront. On day 7, they’d have to subscribe to continue.

Paymentless free trials. That makes sense given your audience.

Exactly. And I was confident it would work. I mean, if our free-to-paid is that strong, even if half as many people convert, that’s still solid, right? Especially at onboarding—that’s where the majority of our revenue comes from.
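Mike’s back-of-envelope logic here can be written down as a quick sketch. It assumes the "7 out of 10 stayed" figure is the trial-to-paid rate and uses a purely hypothetical 10% trial-start rate for the credit-card flow; neither the function names nor that start rate come from Abide.

```python
def paid_per_100_installs(trial_start_rate: float, trial_to_paid_rate: float) -> float:
    """Expected paying subscribers per 100 installs."""
    return 100 * trial_start_rate * trial_to_paid_rate


def break_even_start_rate(control_start_rate: float,
                          control_paid_rate: float,
                          variant_paid_rate: float) -> float:
    """Trial-start rate the variant needs to match control's paid subscribers."""
    return control_start_rate * control_paid_rate / variant_paid_rate


# From the interview: "7 out of 10 stayed" (read here as trial-to-paid).
CONTROL_TRIAL_TO_PAID = 0.70
# Mike's pessimistic case: "even if half as many people convert".
VARIANT_TRIAL_TO_PAID = CONTROL_TRIAL_TO_PAID / 2
# Hypothetical: share of installs that start a trial when a card is required.
CONTROL_START_RATE = 0.10

needed_start_rate = break_even_start_rate(
    CONTROL_START_RATE, CONTROL_TRIAL_TO_PAID, VARIANT_TRIAL_TO_PAID
)
print(f"Paymentless variant must start trials at >= {needed_start_rate:.0%} of installs")
```

Under those assumptions, halving trial-to-paid means the paymentless flow has to roughly double trial starts just to break even. That is the bet the onboarding test was making.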

So we ran the test in onboarding. A 50-50 test.

Bold move.

I felt so good about it. I thought we were about to juice our numbers. And then… it was basically flat. Like, 0.01% higher.

Oh man.

Test #1: The Onboarding Flop

Yeah, I was humbled. At first I’m thinking, “Wait, maybe my product’s not that great?” But then we realized the issue: we were asking people to commit before they had felt the value. People hadn’t experienced the product yet. They didn’t know what they were getting into.

So you took it down?

We took the test down and went back to control. But I didn’t give up on the concept. I still believed in it. The data told me that once people get into the product, it’s sticky. They just needed to feel that first.

After the onboarding test, we realized we had introduced unintended complexity by bypassing our standard account creation flow, which likely hurt performance. That insight led us to re-architect the experience so we could deploy it intentionally at any moment in the user journey.

Test #2: Playlists as the Gateway

So what did you do next?

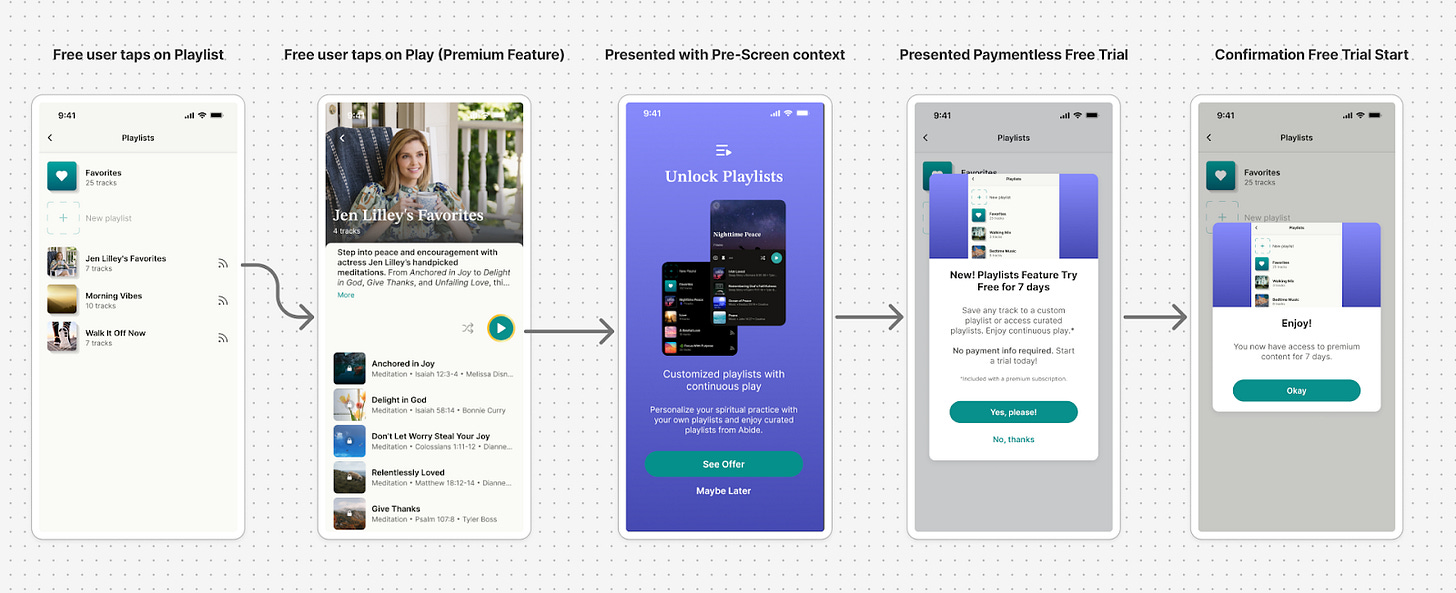

We had a new feature we wanted to build: playlists. This is an audio app—how do you not have playlists, right? Our users wanted to fall asleep to curated content that would play all night without interruption. It was a highly requested feature.

And we realized: this was something users clearly wanted…and it was meaningful enough to make Premium feel distinct.

Wait, so you made playlists a premium feature?

Sort of. For free users, when they hit the playlist feature, instead of showing them a traditional paywall, we let them unlock it by accepting a paymentless free trial.

So the second test was basically putting paymentless trials in front of a high-intent feature?

Exactly. These were users who had already experienced the app and were actively trying to do something specific. They had felt the value.

Now the trial wasn’t abstract — it was access to something they genuinely wanted. And you know what? It worked. Not a massive revenue driver because it’s a smaller cohort, but for those users, the conversion rate was strong. About 3 out of every 7 users who saw it converted.

That’s solid! But I’m guessing playlists alone wasn’t enough to move the business?

Right. It was impactful, but it was only a sliver of our acquisition and upgrades. We still needed subscriptions. Not everyone was making it to playlists, and we needed something with higher volume.

Test #3: Content Gating + The 4-Hour Window

So that brings us to the third test?

Yeah, this past September we made a major shift. We realized we were giving away so much that users couldn’t clearly see what made Premium special. So we shifted from having 90% of content free to making only about 10% free for new users.

Whoa. That’s a huge philosophical change for the business, especially for a nonprofit.

Very much. But we looked at apps like Calm and Headspace. Try finding 2-3 pieces of free content in your first 10 minutes. Everything is locked for a reason. We were in a season where we couldn’t just pump downloads to the App Store. We had to make the Premium experience unmistakable.

How hard was it to get buy-in from the nonprofit leaders?

The key was showing them the risk was minimal. We were getting 80-85% of our revenue in onboarding before this gated experience even kicked in. So we were only impacting the next 10%, from users who converted in the first week, and the 5% long tail that came in over 90 days.

We started at 10% of new users, saw good traction, rolled it out to 25%, then 50%. Subscriptions went up 8-10% versus control. Not because we restricted access, but because we clarified the value. Free listening dropped ~30%, primarily among low-intent users — while revenue per new user increased materially.
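A staged rollout like the 10% to 25% to 50% ramp Mike describes is often done with deterministic hash bucketing, so a user who was in the test at 10% stays in it as the percentage grows. The sketch below shows that general technique; it is an assumption for illustration, not Abide’s actual implementation, and the experiment name and user IDs are made up.

```python
import hashlib


def rollout_bucket(user_id: str, experiment: str) -> int:
    """Map a user to a stable bucket from 0 to 99 for a given experiment."""
    digest = hashlib.sha256(f"{experiment}:{user_id}".encode("utf-8")).hexdigest()
    return int(digest, 16) % 100


def in_treatment(user_id: str, experiment: str, rollout_percent: int) -> bool:
    """A user is in treatment when their bucket falls below the rollout percent."""
    return rollout_bucket(user_id, experiment) < rollout_percent


# Hypothetical experiment name and user IDs, for illustration only.
EXPERIMENT = "content-gating"
users = [f"user-{i}" for i in range(1000)]

for percent in (10, 25, 50):
    count = sum(in_treatment(u, EXPERIMENT, percent) for u in users)
    print(f"{percent}% rollout -> {count} of {len(users)} users in treatment")
```

Because the bucket depends only on the user and the experiment name, raising the percentage only adds users. Nobody flips from treatment back to control mid-test.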

So you had to decide: what’s a free user worth to us?

Exactly. And my CFO made it easy. He said, “How much are they worth to us, Mike?” When we did the math, it was clear. Turn it up.

So now all iOS users who skip upgrading on onboarding clearly see what’s included in Premium and what’s free.

And that’s when you brought back paymentless trials?

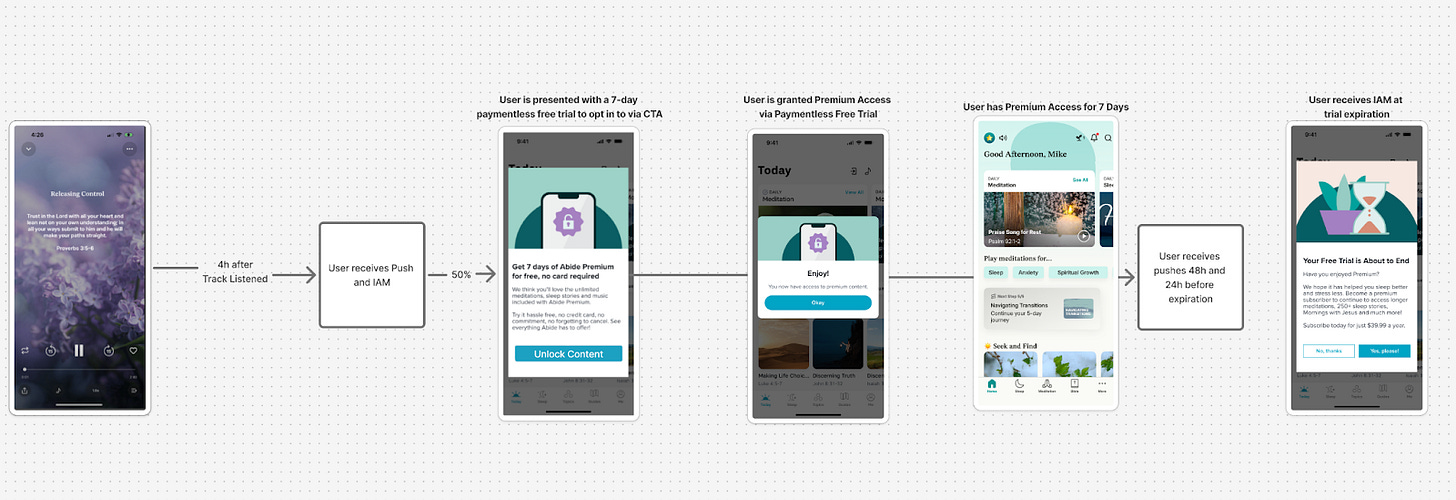

Two weeks ago, we shipped the third iteration. Now, 4 hours after someone’s last play, they get a push notification or in-app message saying: “Congrats, you’ve unlocked premium. No credit card required.”

It’s an unsolicited surprise and delight moment. But they’ve experienced the app, they’ve seen what’s Premium, and now when we offer frictionless access, it feels meaningful.
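For readers who think in code, the trigger Mike describes can be sketched as a simple eligibility check. The 4-hour delay and the account requirement come from the interview; the `User` fields, the one-grant-per-user rule, and the function name are hypothetical stand-ins.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Optional

# From the interview: the offer fires 4 hours after the user's last play.
UNLOCK_DELAY = timedelta(hours=4)


@dataclass
class User:
    # All fields are hypothetical names for illustration.
    has_account: bool
    is_premium: bool
    last_play_at: Optional[datetime]
    trial_already_granted: bool = False


def should_offer_paymentless_trial(user: User, now: datetime) -> bool:
    """Return True when a free user is due the 'you've unlocked premium' message."""
    if not user.has_account or user.is_premium:
        return False
    if user.trial_already_granted:
        # Assumption: the surprise unlock is offered once per user.
        return False
    if user.last_play_at is None:
        # The user has not experienced the app yet, so there is no value to build on.
        return False
    return now - user.last_play_at >= UNLOCK_DELAY


now = datetime(2025, 1, 1, 12, 0)
listener = User(has_account=True, is_premium=False,
                last_play_at=now - timedelta(hours=5))
print(should_offer_paymentless_trial(listener, now))
```

The point of the check is the ordering: the user has played something first, and only then does the offer arrive.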

And how’s it performing?

Two weeks in, we’re up 6% in subscriptions versus the control at 25% of users. Early days, but it’s showing real signal.
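"Early days" is worth taking literally. Here is a rough sketch of how one might check whether a 6% relative lift is distinguishable from noise, using a standard two-proportion z-test. The conversion counts and sample sizes are invented for illustration; Abide’s real numbers were not shared.

```python
import math


def relative_lift(control_rate: float, treatment_rate: float) -> float:
    """Relative change of treatment over control, e.g. 0.06 for a 6% lift."""
    return (treatment_rate - control_rate) / control_rate


def two_proportion_z(conv_c: int, n_c: int, conv_t: int, n_t: int) -> float:
    """z-statistic for the difference between two conversion rates (pooled variance)."""
    p_c = conv_c / n_c
    p_t = conv_t / n_t
    pooled = (conv_c + conv_t) / (n_c + n_t)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / n_c + 1 / n_t))
    return (p_t - p_c) / std_err


# Hypothetical counts: 5.0% control vs 5.3% treatment, 10,000 users each.
CONTROL_CONVERSIONS, CONTROL_USERS = 500, 10_000
TREATMENT_CONVERSIONS, TREATMENT_USERS = 530, 10_000

lift = relative_lift(CONTROL_CONVERSIONS / CONTROL_USERS,
                     TREATMENT_CONVERSIONS / TREATMENT_USERS)
z = two_proportion_z(CONTROL_CONVERSIONS, CONTROL_USERS,
                     TREATMENT_CONVERSIONS, TREATMENT_USERS)
print(f"relative lift: {lift:.1%}, z-statistic: {z:.2f}")
```

With these made-up numbers, a 6% lift gives a z-statistic a little under 1, well short of the 1.96 usually used for 95% confidence. The same lift over a larger sample would clear that bar, which is why a two-week read is a signal and not yet a verdict.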

What Made the Difference

So looking back, what made the third test work at scale when the first two fell short?

The offer didn’t change. The experience before the offer did. The first test failed because we asked too early—before people knew what they were getting. The second test worked because we asked at a high-intent moment, but the volume was too small. The third test combined the best of both: high volume and the right context.

By the time people get that notification, they’ve already:

Skipped onboarding and chosen not to pay

Created an account (which is now required)

Experienced the app

Hit the content limits and felt the friction

Now when we offer them a paymentless premium, it’s not abstract. They know what they’re unlocking.

So it’s not just about the feature—it’s about the timing and the user’s state of mind?

Exactly. It’s about respecting where they are in their journey. In onboarding, they don’t know us yet. At playlists, they’re invested but it’s a narrow use case. But 4 hours after they’ve been using the app and bumping into gates? That’s the sweet spot.

Lessons Learned: Don’t Give Up After One Test

Looking back on Mike’s journey, a few things stand out:

One flat test doesn’t mean the idea is wrong. The first test was flat not because paymentless trials were bad, but because the timing was wrong. Paymentless trials aren’t pricing mechanics, they’re trust mechanics. After value, they feel like a reward. Mike didn’t abandon it, he iterated to make it feel like a reward rather than an offer.

Volume matters, but so does context. The playlist test worked with the right users, but at a small scale. The third test proved the pattern:

Right user + wrong moment = flat test.

Right moment + wrong scale = niche win.

Right moment + scale = business impact.

Build on what you learn. Each test taught Mike something new. Test 1: too early, trust wasn’t there. Test 2: value first, high intent works. Test 3: combine volume with context and remove friction.

Sometimes you need to change the environment. Content gating created the conditions for paymentless trials to succeed. Adding that friction, and clarifying what was truly Premium, made frictionless access more meaningful and appealing.

Too often in product, we run a test, see it’s flat, and move on. Mike’s story is a reminder that iteration isn’t just about tweaking variables—it’s about fundamentally rethinking where and when you’re asking the question.
